Do you remember Microsoft’s Twitter chatbot back in 2016? It started as an innocent bot and turned into an AI Nazi in less than a day. Tay was a natural language processing (NLP) project gone wrong. Built on an unsupervised learning model, the bot learned from the data it collected from people. Unfortunately, Tay became a Hitler-loving, feminist-bashing troll by copying its 50,000 ill-intentioned followers.

The difference between NLP and NLU

First-generation NLP suffers from low natural language understanding (NLU), and that’s the problem. Unlike NLU, which takes context into account, first-generation NLP tries to understand human language through a rule-based model.

Closely tied to sentiment analysis, NLU is the branch of AI that can better understand human intent without formalized syntax. This means that applying NLU does more than enable chatbots to understand customer needs. It can also help them recognize a person’s tone of voice, facial expressions and even spelling mishaps.

Let’s look back. Microsoft could have prevented Tay from becoming a crazy Nazi if its NLP model had included a deep learning algorithm for sentiment analysis. Here are the best practices to keep in mind, so you won’t have to cancel an expensive AI project and switch your Twitter to private forever.

First, make contextual understanding a mandatory feature

Natural-language and contextual search through deep learning make semantic search possible. Products such as WorkFusion Chatbots enable rich machine-to-human conversation by moving to an intent-based approach.

For example, WorkFusion Chatbots can parse natural human queries, such as typical why, how and what questions, and extract relevant keywords and entities. These, in turn, are analyzed and interpreted through an API to understand concepts, categories, and even emotions. By fusing natural language understanding with NLP, chatbots can give customers timelier and more personalized service.
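To make the parsing step concrete, here is a minimal sketch of pulling a question type and keywords out of a customer query. It is not WorkFusion's API: the function `parse_query`, the `QUESTION_WORDS` tuple and the `STOPWORDS` set are hypothetical names invented for this illustration, and a production chatbot would rely on trained NLU models rather than a hand-written word list.

```python
import re

# Hypothetical, deliberately tiny vocabularies for illustration only.
QUESTION_WORDS = ("why", "how", "what")
STOPWORDS = {
    "a", "an", "the", "is", "are", "was", "were", "do", "does", "did",
    "i", "my", "me", "you", "your", "can", "to", "of", "in", "on", "for", "it",
}


def parse_query(query):
    """Return the question type (if any) and the remaining keywords."""
    # Lowercase and split into simple word tokens, dropping punctuation.
    tokens = re.findall(r"[a-z0-9']+", query.lower())

    # The first why/how/what token decides the question type.
    question_type = next((t for t in tokens if t in QUESTION_WORDS), None)

    # Keywords are whatever is left after removing filler words.
    keywords = [
        t for t in tokens
        if t not in STOPWORDS and t not in QUESTION_WORDS
    ]
    return {"question_type": question_type, "keywords": keywords}


result = parse_query("How do I reset my password?")
print(result)
```

For the sample query, the sketch reports a `how` question with the keywords `reset` and `password`. Those are the pieces a downstream intent model would then interpret.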

Second, invest in metadata management

Invest in enterprise data management to use both structured and unstructured data to harvest valuable customer insights. This is a top Gartner recommendation. But because information is spread across disparate databases, social media accounts, and documents, it is a challenge to know which data is reliable.

Through metadata, individual pieces of information can be linked and aligned to derive insights from varying datasets. Automated data discovery and crowdsourcing make this possible, as glossaries and dictionaries can be built with minimal human assistance. This metadata, in turn, makes it easier for NLP algorithms to run their insight-driven processes.

Third, switch to a proactive bot

Switch to a more proactive bot to pre-emptively know what a customer needs. This is another best practice in natural language processing. More than a billion people have access to Siri and Google Now. Because of this, the notion of virtual assistants is no longer far from mainstream.

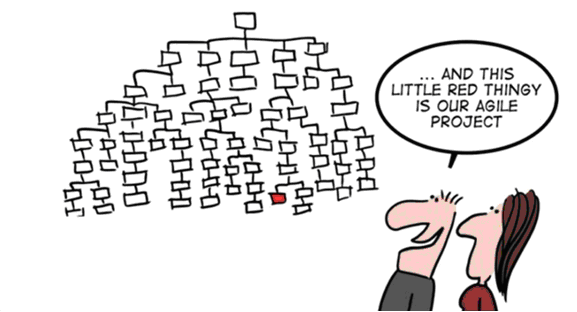

Robotic Process Automation (RPA) products such as WorkFusion RPA can empower proactive bots to follow rules and execute commands that are typically done by a human. WorkFusion RPA Express is a free product that companies can try to automate web, desktop, and terminal applications. Combining RPA with NLP can provide a rich and useful experience to customers by simulating a natural interaction with human customer support.

Finally, create a minimum viable product (MVP) using a Validated Learning approach

Switching to an NLP- and NLU-focused chatbot or insight engine can pose a lot of risks (just ask Microsoft!). To address these unknowns, it is best to start with a pilot. Choose a small project and create a minimum viable product.

An MVP is an application with limited features that is still good enough for people to use, so you can gather early feedback. Combined with Eric Ries’ Validated Learning approach, your MVP should have metrics that measure the success of your NLP model.

Measuring the NLP Model

I found the following machine learning indicators especially useful for running a build-measure-learn cycle. As you develop and train your natural language processing model, you must regularly measure its performance through these key performance indicators (KPIs). This will tell you if you’re on the right track.

- Sensitivity (also known as Recall)

- The probability that the model correctly identifies an actual positive. For example, it is the ability to correctly identify the entities related to a natural human question.

- Formula: TP / (TP + FN)

- Specificity

- The probability that the model correctly rejects an actual negative, such as the ability to correctly reject entities unrelated to a natural human question.

- Formula: TN / (TN + FP)

- Precision (also known as the Positive Predictive Value or PPV)

- The fraction of retrieved documents that are relevant to the query.

- Formula: TP / (TP + FP)

- Negative Predictive Value (NPV)

- The fraction of documents the model rejected (did not retrieve) that are in fact not relevant to the query.

- Formula: TN / (TN + FN)

Legend:

- TP: True Positive

- TN: True Negative

- FN: False Negative

- FP: False Positive
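The four formulas above can be computed from the confusion counts in a few lines. The following is a minimal sketch rather than a reference implementation: the names `ConfusionCounts` and `kpis` are made up for this example, the counts are invented sample numbers, and returning `None` when a denominator is zero is just one possible convention.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Hypothetical container for the four outcome counts."""
    tp: int  # true positives
    tn: int  # true negatives
    fp: int  # false positives
    fn: int  # false negatives


def _ratio(numerator, denominator):
    # A metric is undefined when its denominator is zero; signal that with None.
    return numerator / denominator if denominator else None


def kpis(c):
    """Compute the four KPIs from the formulas in the list above."""
    return {
        "sensitivity": _ratio(c.tp, c.tp + c.fn),  # TP / (TP + FN)
        "specificity": _ratio(c.tn, c.tn + c.fp),  # TN / (TN + FP)
        "precision": _ratio(c.tp, c.tp + c.fp),    # TP / (TP + FP)
        "npv": _ratio(c.tn, c.tn + c.fn),          # TN / (TN + FN)
    }


# Invented sample counts for 100 evaluated queries.
counts = ConfusionCounts(tp=40, tn=45, fp=5, fn=10)
for name, value in kpis(counts).items():
    print(f"{name}: {value:.3f}")
```

With these sample counts, sensitivity is 0.800, specificity is 0.900, precision is about 0.889 and NPV is about 0.818. Recomputing the same four numbers after every training iteration gives the build-measure-learn cycle something concrete to compare.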

Key Takeaway

Start with something small and work your way up. Natural language processing models are meant to mature over time. With a Validated Learning approach and a low-risk MVP, carefully improve your model’s contextual understanding by enhancing its metadata and widening its database integrations. Combining NLP with other technologies such as robotic process automation could be the next phase of chatbots. Virtual assistants powered by RPA are arguably the ideal state of chatbot evolution. Not only will this improve customer satisfaction, it will also improve business process efficiency.